August 2013 // Volume 51 // Number 4 // Ideas at Work // v51-4iw1

Using Automated Blogging for Creation and Delivery of Topic-Centric News

Abstract

Every day, news relevant to Extension clientele is posted to the Internet from a variety of sources such as the popular press, scientific journals, and government agency press releases. To stay current with the information being published, an end user must follow all of the possible sources of information, a task that can be intimidating and impractical. The development and publication of an automated news-indexing blog provides readers with a single source for daily, even hourly, updates of current events related to a specific topic.

Introduction

A Web-log, also known as a blog, is an electronic commentary or description of events (Kinsey, 2010) that can be quickly updated and published with little expertise in digital communication. Jones, Kaminski, Christians, and Hoffman (2011) have previously described how two blogs are being used for dissemination of turfgrass information. Although blogs can be effective mechanisms for communicating, the blog's author faces the challenge of keeping the information flowing so as not to lose readership.

Information is continuously published electronically on the Internet, and finding that information is becoming easier with the evolution of tools such as Google Search. Perhaps Seger (2011) said it best: "The power of Google should not be seen as an obstacle, rather it should be viewed as an opportunity for Extension." When that power of Google Search is harnessed and funneled through other Internet-based tools, it is possible to build a blog that updates itself multiple times each day with little, if any, interaction from the blog's author. Over time, the blog can evolve into a fully automated daily email newsletter, a really simple syndication (RSS) feed, and even automated posts to a Twitter account.

Really simple syndication is a structured data framework that allows end users to select how they wish to view the information provided (Holvoet, 2006). The data, known as an RSS feed, can be formatted for viewing in a Web page, used to populate news browsing software, or tweeted to readers. Tweets are the 140-character messages shared over the Twitter social network (Lowe & Laffey, 2011). Other users of Twitter subscribe to an author's Twitter account through a process called "following." When the author subsequently tweets, all of the followers receive the messages in real time.

The Daily Dirt (Crouse, 2012) is an example of an automated news-indexing blog that reaches readers through all of the aforementioned mechanisms. This article describes the methodology used to index and deliver topic-centric news to more than 230 email subscribers and 1,320 Twitter followers. Cumulatively, since inception in 2009, the items posted to the Daily Dirt have been viewed more than 1,058,000 times.

The Process

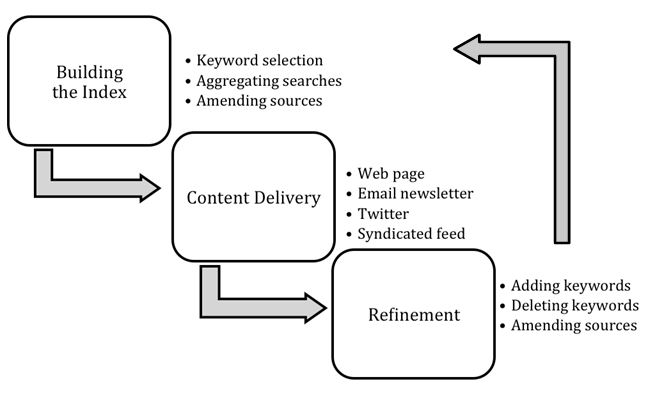

There is a logical stepwise approach to automating a news-indexing blog. It begins with the creation of the initial index, proceeds to content delivery, and then to the continual refinement of the keywords in the index (Figure 1).

Figure 1. A Stepwise Approach to Finding, Delivering, and Refining Information for an Automated Blog

Building the Index

- Selecting Keywords and Storing Searches: To start the automated blogging process, the author selects keywords related to the topic of the blog. For example, keyword combinations used for the Daily Dirt include soil science, soil erosion, nutrient management, and soil geographic information. Using these keywords, Google News (http://news.google.com) is searched for relevant information.

- Aggregating Stored Searches: Following successful searches, the Web addresses of the keyword searches (for example, http://news.google.com/news?q=%22soil+science%22&output=rss) are captured and stored in Google Reader (http://reader.google.com) as RSS feeds. Reader is a news browser that can aggregate multiple RSS feeds into a single document in much the same way a newspaper selects stories from the Associated Press to construct a single daily publication. Within Reader, all of the feeds are cataloged in a single folder, which allows the aggregation of multiple RSS feeds into a single, new RSS feed.

- Amending Sources: Professional associations, advocacy groups, and government agencies all routinely distribute news through RSS feeds. For example, the Soil Science Society of America (SSSA) routinely publishes news as RSS feeds. Those feeds are stored in Reader and cataloged in the aggregating folder so that as SSSA publishes information it is incorporated into the larger, aggregated feed.
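The index-building steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the mechanism used by Google Reader: the function names `search_feed_url`, `parse_items`, and `aggregate` are hypothetical, the feeds are assumed to be well-formed RSS 2.0 documents, and dropping items that share a link is an assumption about how an aggregated feed might avoid duplicates. The only element taken directly from the text is the form of the search address.

```python
from urllib.parse import urlencode
import xml.etree.ElementTree as ET

# Base address taken from the example search URL given in the text.
GOOGLE_NEWS_BASE = "http://news.google.com/news"


def search_feed_url(phrase: str) -> str:
    """Return the RSS address for an exact-phrase news search."""
    # Wrapping the phrase in double quotes requests an exact-phrase match.
    query = {"q": f'"{phrase}"', "output": "rss"}
    return f"{GOOGLE_NEWS_BASE}?{urlencode(query)}"


def parse_items(rss_xml: str) -> list:
    """Extract the title and link of every <item> in an RSS document."""
    root = ET.fromstring(rss_xml)
    return [
        {
            "title": item.findtext("title", default=""),
            "link": item.findtext("link", default=""),
        }
        for item in root.iter("item")
    ]


def aggregate(feeds: list) -> list:
    """Merge several RSS documents into one list, skipping repeated links."""
    seen = set()
    merged = []
    for rss_xml in feeds:
        for entry in parse_items(rss_xml):
            if entry["link"] not in seen:
                seen.add(entry["link"])
                merged.append(entry)
    return merged


# Build one stored search per keyword combination.
keywords = ["soil science", "soil erosion", "nutrient management"]
urls = [search_feed_url(k) for k in keywords]
print(urls[0])  # http://news.google.com/news?q=%22soil+science%22&output=rss
```

Feeds from other sources, such as a professional society, would simply be added to the list passed to `aggregate`, which mirrors cataloging them in the same aggregating folder.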

Content Delivery

Once the aggregated RSS feed is constructed, the next step is delivery of the news to the end user. One challenge is providing the information in a format that is appealing to the readers, which is why RSS feeds are useful. The feed is nothing more than a stream of data that is easily transformed to a variety of formats.

- Web pages can be constructed automatically from the feed.

- Email newsletters can be delivered to the reader's inbox daily.

- Each item in the feed can be truncated to 140 characters and sent to followers on the Twitter social network.

- The RSS feed can be published in the raw format so readers can use their own news browser software.

The Daily Dirt relies on Google's Feedburner service (http://feedburner.google.com) as the delivery mechanism for the RSS feed. The free service will send out daily emails, post in real time to Twitter, and make available a Web page for those who wish to read the feed. It also provides a shared RSS feed that can be used in news browsers. Regardless of the mechanism of delivery, all recipients receive the same information.
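To illustrate how a feed item might be shortened for Twitter, the following Python sketch combines a headline and its link and trims the headline so the message fits the 140-character limit. It is not a description of how Feedburner performs the task. The function name `to_tweet` is hypothetical, and the sketch assumes that every character of the link counts toward the limit, which is a simplification.

```python
TWEET_LIMIT = 140  # character limit cited in the article


def to_tweet(title: str, link: str, limit: int = TWEET_LIMIT) -> str:
    """Combine a headline and a link, shortening the headline to fit."""
    # Reserve room for the link plus one separating space.
    room = limit - len(link) - 1
    if room <= 0:
        # The link alone fills the message, so cut it at the limit.
        return link[:limit]
    if len(title) > room:
        # Trim the headline and mark the cut with one ellipsis character.
        title = title[: room - 1].rstrip() + "…"
    return f"{title} {link}"


headline = "Researchers report new findings on soil erosion " * 4
message = to_tweet(headline, "http://example.org/story")
print(len(message) <= TWEET_LIMIT)  # True
```

Placing the link last keeps it intact when the headline is cut, so followers can still reach the full story.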

Continual Refinement

Once initiated, the automated process will need to be monitored and refined to minimize unwanted content. Likewise, a query might need to be changed to further structure the search. For example, in order to limit the scope of the search, the more restrictive phrase "nutrient management" may need to be used instead of two separate keywords "nutrient" and "management." Once the refined query is established, the original, broader query is deleted from Reader and the new RSS feed is stored.
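The effect of tightening a query can be shown with a small Python sketch that compares a broad match on two separate keywords with a restrictive match on the full phrase. The functions `matches_keywords` and `matches_phrase` and the sample headlines are hypothetical, and the simple substring test used here is an assumption made for illustration; a search engine's own matching rules will differ.

```python
def matches_keywords(text: str, keywords: list) -> bool:
    """Broad match: every keyword appears somewhere in the text."""
    lowered = text.lower()
    return all(keyword.lower() in lowered for keyword in keywords)


def matches_phrase(text: str, phrase: str) -> bool:
    """Restrictive match: the words appear together and in order."""
    return phrase.lower() in text.lower()


# Hypothetical headlines used only to show the difference.
headlines = [
    "New nutrient management plan helps farms",
    "Hospital management reviews nutrient labels",
]

broad = [h for h in headlines if matches_keywords(h, ["nutrient", "management"])]
narrow = [h for h in headlines if matches_phrase(h, "nutrient management")]

print(len(broad))   # 2
print(len(narrow))  # 1
```

The broad query admits the unrelated hospital headline, while the phrase query keeps only the item about nutrient management, which is the kind of unwanted content the refinement step is meant to remove.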

Summary

Blogging about a topic of interest doesn't have to be a daily chore. In fact, by spending a few hours—one time—defining keywords, conducting and storing searches, and then configuring free Web services to distribute the information, sharing news with Extension clientele can become almost fully automated. The only maintenance necessary is the occasional refinement of keyword combinations and perhaps a periodic check to make sure all published products are receiving the news feed from the aggregated searches. Ultimately, the process can result in a large flow of information being viewed by Extension clientele with little human input from the blog author.

References

Crouse, D. (2012). The Daily Dirt. Retrieved from: http://soilscience.info/dailydirt

Holvoet, K. (2006). What is RSS and how can libraries use it to improve patron service? Library Hi Tech News, 23(8), 32-33.

Jones, M. A., Kaminski, J. E., Christians, N. E., & Hoffman, M. D. (2011). Using blogs to disseminate information in the turfgrass industry. Journal of Extension [Online], 49(1) Article 1RIB7. Available at: http://www.joe.org/joe/2011february/rb7.php

Kinsey, J. (2010). Five social media tools for the Extension toolbox. Journal of Extension [Online], 48(5) Article 5TOT7. Available at: http://www.joe.org/joe/2010october/tt7.php

Lowe, B., & Laffey, D. (2011). Is Twitter for the birds? Using Twitter to enhance student learning in a marketing course. Journal of Marketing Education, 33, 183-192.

Seger, J. (2011). The new digital [st]age: Barriers to the adoption and adaptation of new technologies to deliver Extension programming and how to address them. Journal of Extension [Online], 49(1) Article 1FEA1. Available at: http://www.joe.org/joe/2011february/a1.php