February 2008 // Volume 46 // Number 1 // Tools of the Trade // 1TOT2

Key Facts and Key Resources for Program Evaluation

Abstract

Extension educators are increasingly involved in program evaluation. This article offers tips on choosing evaluation methods, writing questions and answer categories, formatting the evaluation, and communicating the results to stakeholders. It also points to several helpful resources for learning more about program evaluation.

When to Do an Evaluation

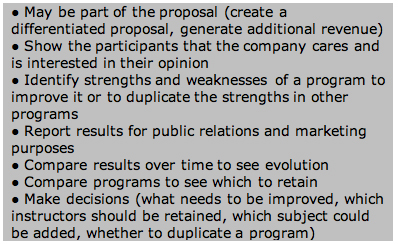

Evaluation is a valuable activity for Extension programs and is increasingly required by funders and universities. Evaluations can serve different purposes, which are summarized in Figure 1 (McNamara, 2007).

Figure 1. Purposes of Evaluation

Not every program requires or justifies an evaluation (Figure 2). For programs whose outcomes may be negative, Extension staff should ask themselves whether they want an evaluation, what its objective will be, and how clients will react (PennState, 2007).

Figure 2. Situations When Program Evaluation Is Useful

Which Evaluation Method to Use

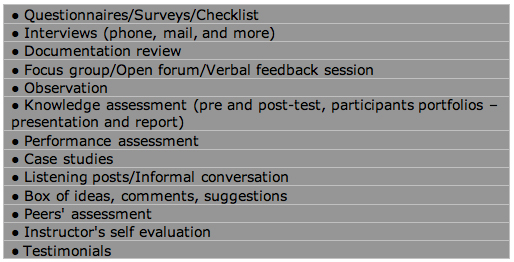

The choice of evaluation method(s) depends on the audience, the type and amount of information desired, and the available resources. There is also a trade-off between the quantity and the quality of the information collected. Figure 3 lists methods used to evaluate programs (McNamara, 2007). If the budget allows, several methods can be combined to evaluate a program.

Figure 3. Evaluation Methods

Creating the Evaluation Material

Creating the evaluation requires choosing the questions of interest and the answer categories for each question. The format of the evaluation also matters.

Question Examples and Answer Categories

To avoid biasing the results, evaluations start with general questions about participants' overall impression of the program (program focus, format, relevance). The program dose (depth, frequency, amount/quantity) is also of interest. Several evaluations also include questions about logistics and marketing, and some ask participants to rate instructors and topics. Some demographic information may also be gathered. It is also important to know what learners think about the delivery method and whether they have suggestions (Would there be a more effective way? How would you modify the program in the future?). Finally, the questions should reflect what the stakeholders want to know and what the objectives of the program are (PennState, 2007).

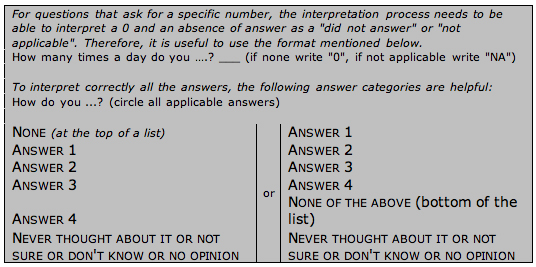

Evaluations often contain a mix of closed and open-ended questions. Closed questions may ask for a specific answer or may list suggested answers to choose from (Figure 4). Suggested answers should be typed in bold and small caps and include at least four categories. Repeating the same set of answer categories across questions, when possible, also helps respondents, as long as the set is not repeated too often (PennState, 2007).

Figure 4. Suggestions for Correct Interpretations of Closed Questions

Scales can also serve as answer categories for rating questions. A scale can be based on numbers or on words. Each type has advantages and disadvantages (Table 1), so some evaluations use numbered scales with a word interpretation for each number (PennState, 2007).

Table 1.

| Characteristics | Scales with Numbers | Scales with Words |
|---|---|---|
| Advantages | | |
| Disadvantages | | |

Ranking questions should be avoided, particularly when many items need to be ranked. Instead, participants should be asked to identify the single most important issue, or the three most important issues, in the list (PennState, 2007).
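To make the recommended alternative to ranking concrete, the sketch below tallies how many participants placed each issue among their top three picks, rather than averaging full rankings. It is a minimal illustration, not part of the PennState tipsheets: it assumes the answers have already been entered as lists of issue labels, and the function name `tally_top_issues` and the sample issues are hypothetical.

```python
from collections import Counter


def tally_top_issues(responses: list[list[str]], max_picks: int = 3) -> Counter:
    """Count how many respondents selected each issue among their top picks.

    Hypothetical helper. Each response is the list of issues one participant
    marked as most important (at most `max_picks`). Order within a response
    is ignored, so nobody is asked to rank the full list.
    """
    counts: Counter = Counter()
    for picks in responses:
        if len(picks) > max_picks:
            raise ValueError(f"response has more than {max_picks} picks: {picks}")
        # set() ensures an issue marked twice on one form is counted once
        counts.update(set(picks))
    return counts


if __name__ == "__main__":
    # Hypothetical responses from four participants
    responses = [
        ["cost", "time", "parking"],
        ["cost", "handouts"],
        ["time", "cost", "handouts"],
        ["parking"],
    ]
    for issue, n in tally_top_issues(responses).most_common():
        print(f"{issue}: {n} of {len(responses)} respondents")
```

Reporting these counts, or the share of respondents who selected each issue, keeps the analysis as simple as the question itself.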

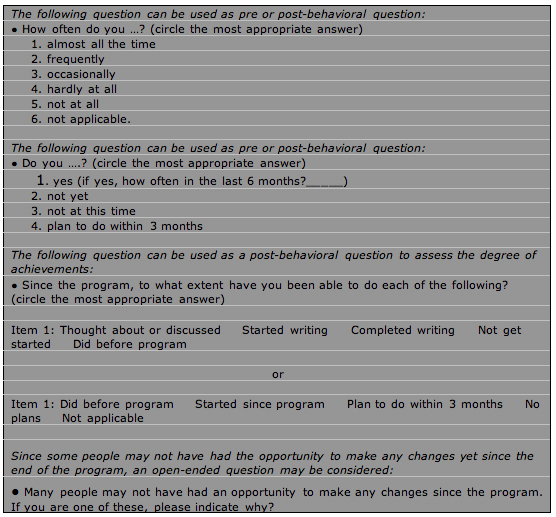

Some programs use pre-program, post-program, and follow-up (post-post) evaluations. Some studies have shown that participants report their pre-program behavior or knowledge more truthfully when asked after the program. Because people do not behave consistently, behavioral questions should ask about frequency, include the word "ever," or offer answer categories that are not limited to yes or no (Figure 5) (PennState, 2007).

Figure 5. Examples of Behavioral Questions

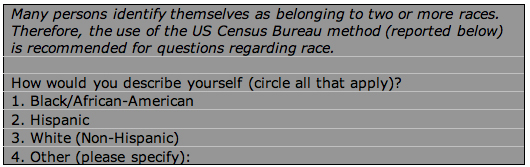

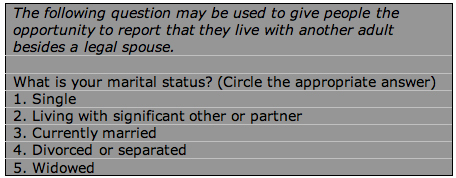

Questions about age, race, and marital status are particularly challenging to write (Figures 6 and 7). Age is a sensitive topic, so the question should be avoided unless the information is needed. It should be placed at the end of the questionnaire unless age is used for screening. Age categories should be relevant to the audience and mutually exclusive, and they should include one category above the age of the oldest members of the audience. If the exact age is needed, asking for the year of birth yields more accurate answers (PennState, 2007).
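The rules for age categories (mutually exclusive, relevant to the audience, with a category above the oldest expected participant) can be checked mechanically before the form is printed. The following Python sketch assumes a general audience; the bracket boundaries and the names `AGE_BRACKETS`, `age_from_birth_year`, and `age_category` are hypothetical and would need to be adapted to the actual program.

```python
from datetime import date
from typing import Optional

# Hypothetical age brackets; adapt them to the actual audience.
# Each bracket is a half-open interval [low, high), so no age falls into two
# categories, and the last bracket is open-ended so it also covers anyone
# older than the oldest expected participant.
AGE_BRACKETS = [
    (0, 18, "Under 18"),
    (18, 35, "18-34"),
    (35, 50, "35-49"),
    (50, 65, "50-64"),
    (65, None, "65 or older"),
]


def age_from_birth_year(birth_year: int, survey_year: Optional[int] = None) -> int:
    """Approximate age from the year of birth.

    The result is one year too high for people whose birthday has not yet
    come in the survey year.
    """
    year = survey_year if survey_year is not None else date.today().year
    return year - birth_year


def age_category(age: int) -> str:
    """Return the label of the one bracket that contains `age`."""
    for low, high, label in AGE_BRACKETS:
        if age >= low and (high is None or age < high):
            return label
    raise ValueError(f"age must be non-negative, got {age}")
```

Half-open intervals are one simple way to guarantee that a boundary age such as 35 belongs to exactly one category, which is what the printed labels "18-34" and "35-49" express.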

Figure 6. Race Question

Figure 7. Question Concerning Marital Status

Closed-ended questions should replace open-ended questions when the audience is likely to give many different answers. To determine the suggested answers, the question can be asked in open-ended form the first few times the program is offered. An "other" category should also be added to the suggested answers so that participants can add something not included in the list (PennState, 2007).
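Turning the open-ended answers collected during the first few sessions into suggested answers can be as simple as counting them. The sketch below assumes the pilot answers are available as short text strings; the 10% cut-off (`min_share`) and the function name `suggested_answers` are hypothetical choices, and in practice similar answers would still need to be grouped by hand before counting.

```python
from collections import Counter


def suggested_answers(pilot_answers: list[str], min_share: float = 0.1) -> list[str]:
    """Turn open-ended pilot answers into a closed answer list.

    Hypothetical helper. Answers given by at least `min_share` of pilot
    respondents become suggested answers (most frequent first), and an
    "Other" option is always appended so participants can still write in
    anything not listed.
    """
    # Light normalization so "Newsletter" and " newsletter " count as one answer
    cleaned = [a.strip().lower() for a in pilot_answers if a.strip()]
    if not cleaned:
        return ["Other (please specify)"]
    counts = Counter(cleaned)
    threshold = min_share * len(cleaned)
    frequent = [answer for answer, n in counts.most_common() if n >= threshold]
    return frequent + ["Other (please specify)"]
```

Because of the normalization step, the returned labels are lowercase, so they would normally be reworded before being printed on the evaluation.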

Format

Survey research shows that design matters more than length in motivating respondents to complete an evaluation. The evaluation should look easy to complete and be consistent throughout. Tables are not recommended because they tend to make an evaluation look difficult. For open-ended answers, the answer lines should be short and centered. Suggested answers should be listed one below the other to give an impression of space (PennState, 2007).

Administering the Evaluation

Before administering the evaluation, it is recommended to pretest it with a small sample. When the evaluation is administered or announced, a rationale should be given (i.e., an explanation of its purpose and of the reasons for conducting it), and respondents should be shown that confidentiality will be respected (PennState, 2007).

After the Evaluation

For questions with numeric answers (such as ratings), results should be reported as the percentage of respondents choosing each suggested answer, and the mean for each question should also be reported. For open-ended answers, comments should be organized into meaningful categories, and patterns should be identified. If participants are quoted by name, they must have given their consent (McNamara, 2007).
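The two summaries recommended for numeric questions, the percentage choosing each answer and the mean, can be computed as follows. This is a minimal Python sketch assuming a 5-point rating scale with a word interpretation for each number; the scale labels, the sample ratings, and the name `summarize_rating` are hypothetical.

```python
from collections import Counter
from statistics import mean

# Hypothetical 5-point scale: numbers with a word interpretation for each point.
SCALE = {1: "Poor", 2: "Fair", 3: "Good", 4: "Very good", 5: "Excellent"}


def summarize_rating(ratings: list[int]) -> tuple[dict[str, float], float]:
    """Return the percentage choosing each answer and the mean rating.

    Every point on the scale is reported, including those nobody chose, and
    percentages are based on the number of people who answered the question.
    """
    if not ratings:
        raise ValueError("no responses to summarize")
    unknown = set(ratings) - set(SCALE)
    if unknown:
        raise ValueError(f"ratings outside the scale: {sorted(unknown)}")
    counts = Counter(ratings)
    n = len(ratings)
    percentages = {
        f"{point} ({label})": round(100 * counts[point] / n, 1)
        for point, label in SCALE.items()
    }
    return percentages, round(mean(ratings), 2)


if __name__ == "__main__":
    ratings = [5, 4, 4, 3, 5, 2, 4, 5]  # hypothetical answers to one question
    pct, avg = summarize_rating(ratings)
    for answer, p in pct.items():
        print(f"{answer}: {p}%")
    print(f"Mean: {avg}")
```

For the eight sample ratings, this reports 37.5% for both 4 and 5, 12.5% for both 2 and 3, 0.0% for 1, and a mean of 4.0. Listing every point of the scale, including those nobody chose, keeps the percentages comparable across questions.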

The results can then be used for marketing purposes. They should also be communicated to the team involved in the program; positive results can be excellent motivational tools. Results should also be shared with clients or grant funders when requested or when positive, which may help generate more grants. Finally, follow-up may be organized: some participants may be contacted to better understand their comments or to ask their opinion on alternative solutions.

More information on the subject of program evaluation is available at:

http://www.msu.edu/~suvedi/Resources/Evaluation%20Resources.htm

http://www.phcris.org.au/infobytes/evaluation_gettingstarted.php

Also see The Targeted Evaluation Process (Combs & Falletta, 2000).

References

Combs, W. L., & Falletta, S. V. (2000). The targeted evaluation process. American Society for Training and Development.

McNamara, C. (n.d.). Basic guide to program evaluation. Retrieved July 2007 from http://www.managementhelp.org/evaluatn/fnl_eval.htm

PennState, College of Agricultural Sciences, Cooperative Extension and Outreach. (n.d.). Evaluation tipsheets. Retrieved July 2007 from http://www.extension.psu.edu/evaluation/titles.html